AI Enthusiasm at Yunqi Conference

At the end of September, a light rain fell in Hangzhou, but the AI fervor at Yunqi Town made it feel like summer had not yet faded.

On September 24, the 2025 Yunqi Conference was held as scheduled. During the event, Alibaba Group CEO and Chairman of Alibaba Cloud Intelligence, Wu Yongming, delivered a speech titled “The Path to Super Artificial Intelligence.”

Wu made his first appearance at the Yunqi Conference last year, after taking over Alibaba Cloud. He stated then, “The greatest imagination of generative AI is not to create one or two new super apps on a mobile screen, but to take over the digital world and change the physical world.”

A year later, this vision has transformed into a more concrete roadmap and aggressive actions.

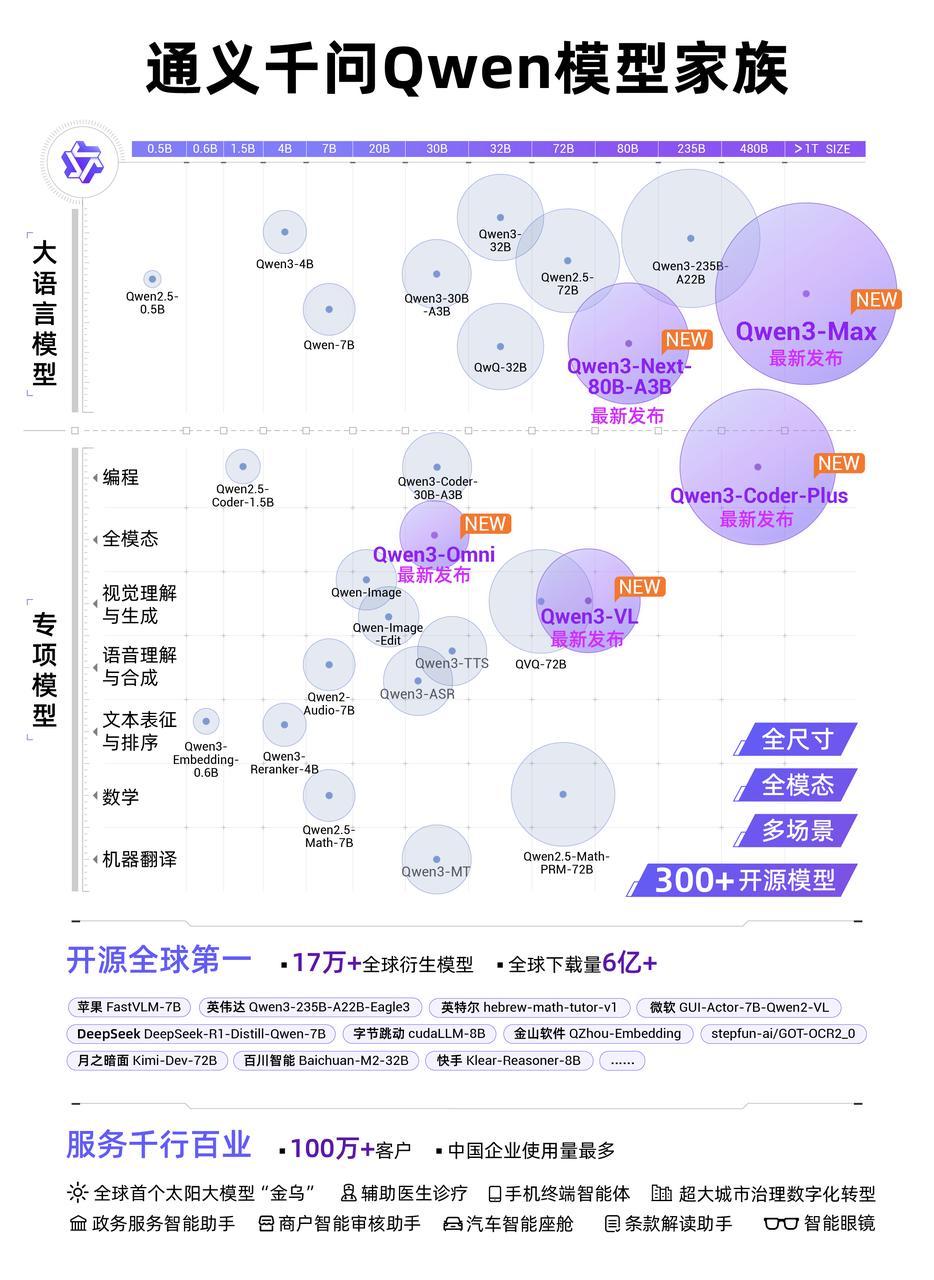

At this year’s Yunqi Conference, Alibaba Cloud introduced a plethora of new products. Among them was the newly launched flagship model Qwen3-Max, which is currently the top-performing model in Alibaba’s Tongyi family, surpassing GPT-5 and Claude Opus 4, ranking among the top three globally on LMArena.

In addition to the flagship model, Alibaba also released six new models, including: the next-generation foundational model architecture Qwen3-Next and its series, the Qwen3-Coder programming model, the Qwen3-VL visual understanding model, the Qwen3-Omni multimodal model, the Wan2.5-preview visual foundational model, and the Tongyi Bailing speech model.

More noteworthy were Wu Yongming’s two bold new assertions.

The first assertion is that large models are the next-generation operating system. Large models will consume software, allowing anyone to create an infinite number of applications using natural language. In the future, almost all software interacting with the computing world may be generated by agents from large models, rather than traditional commercial software.

Consequently, Alibaba Cloud has been comprehensively rebuilding its entire stack, from foundational computing power to infrastructure and cloud layers, to align with the changes brought about by large models.

The second assertion, built on this logic, is that the super AI cloud is the next-generation computer. By analogy with the development stages of computers, natural language serves as the programming language of the AI era, agents are the new software, and context is the new memory. LLMs will act as the intermediary layer through which users, software, and AI computing resources interact, becoming the OS of the AI era.

Alibaba Cloud’s goal is to establish a “super AI cloud” to provide a global intelligent computing network.

In February of this year, Alibaba proposed a three-year, 380 billion yuan AI infrastructure construction plan. Wu Yongming added a new plan today: by 2032, the energy consumption of Alibaba Cloud’s global data centers will be ten times its 2022 level, in anticipation of the arrival of the ASI era.

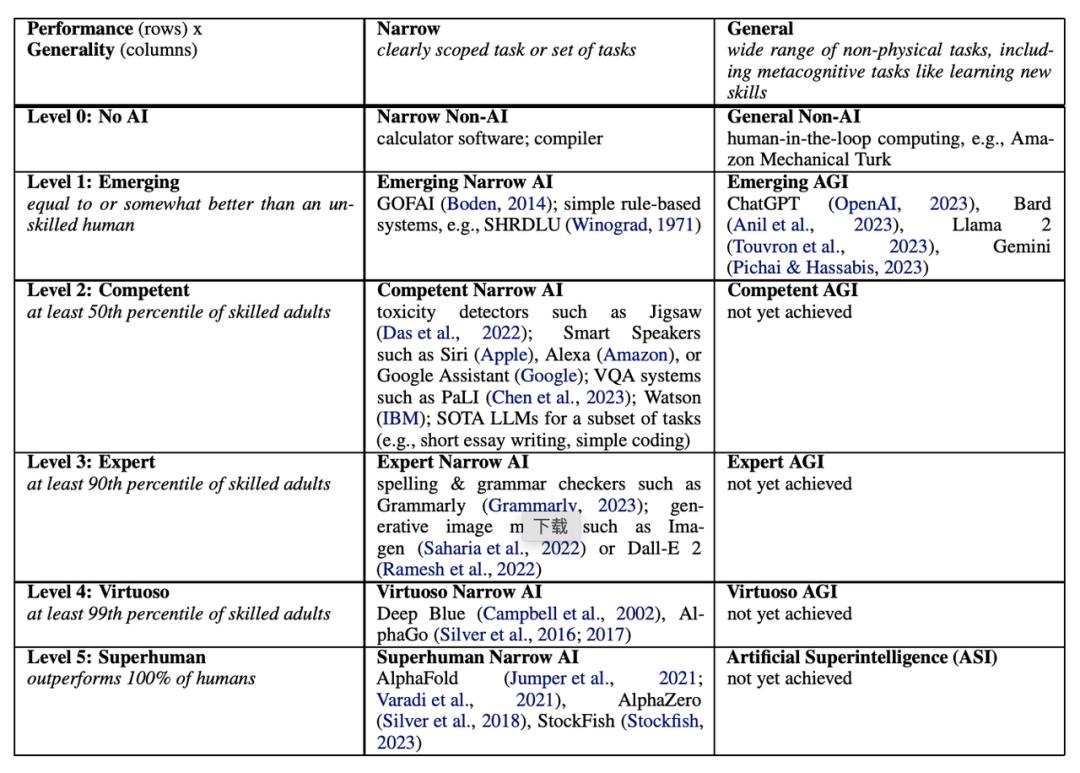

Alibaba Cloud also introduced its AI development strategy and goals: not the AGI (Artificial General Intelligence) that has been widely discussed in the past, but a further step towards ASI (Artificial Super Intelligence).

Wu Yongming elaborated on the three stages leading to super artificial intelligence:

- “Intelligent Emergence”—AI learns from humans, accumulating global knowledge and gradually developing reasoning abilities;

- “Autonomous Action”—AI masters tool usage and programming capabilities to assist humans, which is the current stage of the industry;

- “Self-Iteration”—AI connects with the physical world’s complete raw data for autonomous learning, ultimately able to “surpass humans.”

In 2025, the global large model field is advancing amidst challenges. Following the release of GPT-5, OpenAI faced criticism for falling short of market expectations, with comments about stagnation and obstacles in model innovation. Meanwhile, Meta and OpenAI are making more aggressive capital investments—no one wants to miss out on this wave of technological revolution.

Now, Alibaba Cloud is proving through action that it not only intends to invest but to invest aggressively.

The market has responded enthusiastically to Alibaba Cloud’s new strategy. Today, Alibaba’s stock surged over 9%, reaching its highest point since October 2021.

Seven Model Launches and Saturated Investment

Before the Yunqi Conference, Lin Junyang, head of Alibaba’s Qwen model team, teased on Twitter that they would release more than six new products, and none would be “small things.”

When the models were officially announced, the number exceeded expectations, showcasing a significant commitment. Alibaba Cloud’s CTO, Zhou Jingren, flipped through his slides rapidly during the presentation, yet still ran over his allotted time.

Alibaba Cloud launched a total of seven brand new models, each with significant improvements in scale and performance:

- Qwen3-Max: Flagship model with a pre-training data volume of 36 trillion tokens and over one trillion parameters, significantly enhancing coding and agent tool calling capabilities;

- Qwen3-Next: Next-generation model architecture and series. The model has a total of 80 billion parameters but activates only 3 billion, matching the performance of the flagship 235-billion-parameter Qwen3 model. Training costs have dropped by over 90% compared to the dense model Qwen3-32B;

- Qwen3-VL (Visual Understanding): Not only accurately interprets images and charts, but also features innovative “visual programming” capabilities, converting visual design drafts directly into front-end code and operating mobile devices and computers, progressing from “seeing” to understanding and execution;

- Qwen3-Coder (Code Model): Significantly enhances generation speed, code quality, and security, making it easier to complete complex tasks from code completion and bug fixing to generating complete projects with one click;

- Qwen3-Omni: A native multimodal model that can “hear, speak, see, and write”; it interacts as naturally as chatting with a person, understanding audio and video while maintaining text and image capabilities, suitable for embedded AI in vehicles, glasses, and mobile phones;

- Tongyi Wanxiang Wan2.5-preview: A new visual foundational model that can generate videos from text, generate images from text, and edit images, and can produce matching human voices, sound effects, and background music;

- Tongyi Bailing: A new family of speech models, including speech recognition and synthesis sub-models. For example, Fun-CosyVoice offers hundreds of preset voice tones for use in customer service, sales, live e-commerce, consumer electronics, audiobooks, and children’s entertainment.

Alibaba Cloud does not rely solely on static datasets to validate model capabilities. In blind tests on authoritative rankings such as LMArena, the preview version of Alibaba’s flagship Qwen3-Max has already ranked third on the Chatbot Arena leaderboard.

After DeepSeek ignited the global AI industry, it also sparked a domestic open-source model competition, contrasting sharply with last year’s closed-door development.

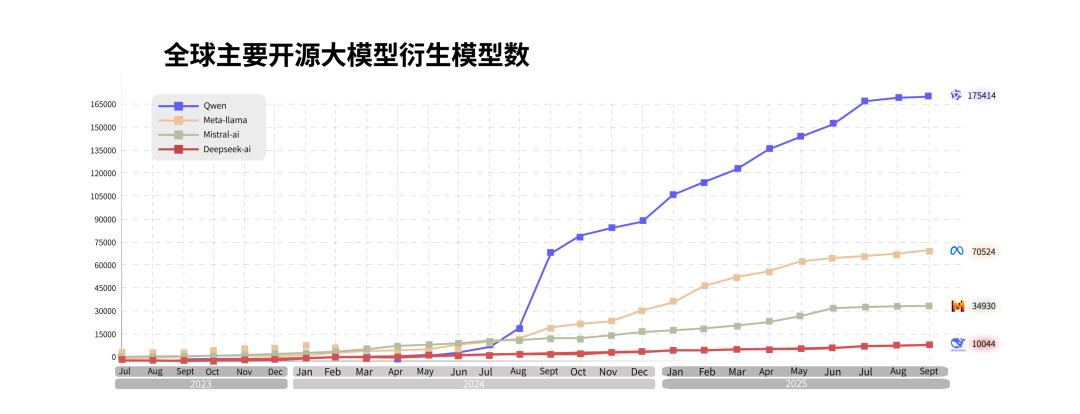

Both domestically and internationally, this year has seen a fierce open-source model battle, with nearly all companies still investing in models increasing their open-source efforts. Alibaba stands out as the most aggressive among domestic giants in pursuing an open-source route.

This stems in part from Alibaba being one of the first companies in China to open-source models and build a model ecosystem. These investments have now yielded tangible returns, motivating Alibaba to make even more aggressive investments.

DeepSeek and Qwen are among the few models that have gained international recognition. Following the open-source surge initiated by DeepSeek, Qwen has once again attracted attention in the global AI community, leading to a new wave of growth.

As of now, Alibaba Tongyi has open-sourced over 300 models, covering “all sizes” and “all modalities,” including LLMs and programming, image, speech, and video models.

Globally, Tongyi is also the number one open-source model family, with over 600 million downloads and more than 170,000 derivative models worldwide.

In addition to models, Alibaba Cloud also released a new agent development framework, ModelStudio-ADK. Agents built with it can autonomously plan and call models, which increases computing power consumption. Alibaba Cloud disclosed that, as model capabilities have improved and agent applications have surged, the daily call volume on its Bailian platform has increased fifteenfold over the past year.

Investments in open-source models not only accelerate model iteration but have also translated into revenue on the cloud. Alibaba has begun to establish a commercial closed loop for the AI era—its latest quarterly report shows that Alibaba Cloud’s quarterly revenue has surged 26% year-on-year, with AI-related revenue achieving triple-digit growth for eight consecutive quarters.

According to a report by the international market research firm Omdia, the Chinese AI cloud market reached 22.3 billion yuan in the first half of 2025. Alibaba Cloud ranked first with a 35.8% market share, more than the second- to fourth-place vendors combined.

“Becoming Android of the LLM Era”

In 2024, amid OpenAI’s Sora release and stagnating GPT-5 development, debates over technical routes briefly dampened sentiment in the global large model field.

However, this sentiment has largely dissipated. Just days before the Yunqi Conference, NVIDIA announced a $100 billion investment in OpenAI. Wu Yongming predicted at the conference that global AI investments in the next five years will exceed $4 trillion.

Alibaba Cloud’s CTO Zhou Jingren admitted in a media interview after the Yunqi Conference that there are now few major disagreements on technical routes across the industry. Almost all companies globally are aggressively investing in AI competition and rapidly releasing models. The question now is how each vendor approaches this.

“The current model competition is a competition between systems,” Zhou Jingren said. “Innovation in model development does not involve holding back major breakthroughs; it is complementary to the foundational infrastructure and cloud.”

How should “system” be understood here? It likely points to a strategic choice in AI.

After DeepSeek changed the global AI narrative, all major players have increased their investments in AI, from foundational computing power to cloud computing and open-source initiatives.

The differences in AI strategy among the major companies form an interesting contrast. Take Tencent’s recent ecosystem conference as an example: Tencent focused more on scenarios and on enterprise (B-end) and consumer (C-end) deployments, applying AI to its own business first before turning outward. ByteDance, on the other hand, resembles iOS, adopting a legion-style approach from models to applications, but it tends to keep its best versions closed-source at first and open-sources at a slower pace.

2023 marked a critical juncture for Alibaba Cloud. After Wu Yongming took over as CEO of Alibaba Cloud, he proposed a strategy of “AI-driven, public cloud-first.”

Since then, Alibaba Cloud has accomplished several key tasks: first, it returned to public cloud, cutting low-profit projects; then, it allocated a substantial budget to AI, investing not only in AI startups but also heavily in self-developed models, open-source efforts, and infrastructure reconstruction.

Alibaba Cloud’s current approach is closer to that of Google. From foundational computing infrastructure to cloud computing and then to models, both Alibaba and Google pursue a full-stack strategy of in-house development and construction, aiming for each layer to be internationally leading.

The ASI proposed by Alibaba today is not a new term. In March of this year, Google DeepMind revealed its “AGI Six-Level Roadmap,” which bears significant similarities to Alibaba’s three-stage path to ASI: the third stage, “surpassing humans,” closely resembles DeepMind’s definition of AGI Level 6.

Aggressive investments in AI stem from the inseparable relationship between AI and cloud computing. Alibaba Cloud even announced a new positioning as a “full-stack AI service provider.” “Tokens are the electricity of the future AI world,” Wu Yongming stated.

Undoubtedly, we are still in the early stages of the AI era. Model calls currently make up only a small fraction of enterprise cloud consumption, but the trend is what matters.

In post-conference interviews, Xu Dong, General Manager of Alibaba Cloud’s Tongyi large model business, told the media that a year ago, most model calls were for offline tasks like data labeling. A year later, calls for online tasks have increased tenfold, with enterprises across industries embedding large models into their production processes. This shows that large models are rapidly bringing incremental growth to the cloud market.

For the past 16 years, Alibaba Cloud has explained its market value as providing the “water and electricity” of the digital world. This is consistent with its current ambition to be the “Android of the LLM era”: to find its place in the AI era and secure a leading position before the application market explodes. That goal has never been clearer.
